Virtually try on clothes with Google’s new AI shopping feature

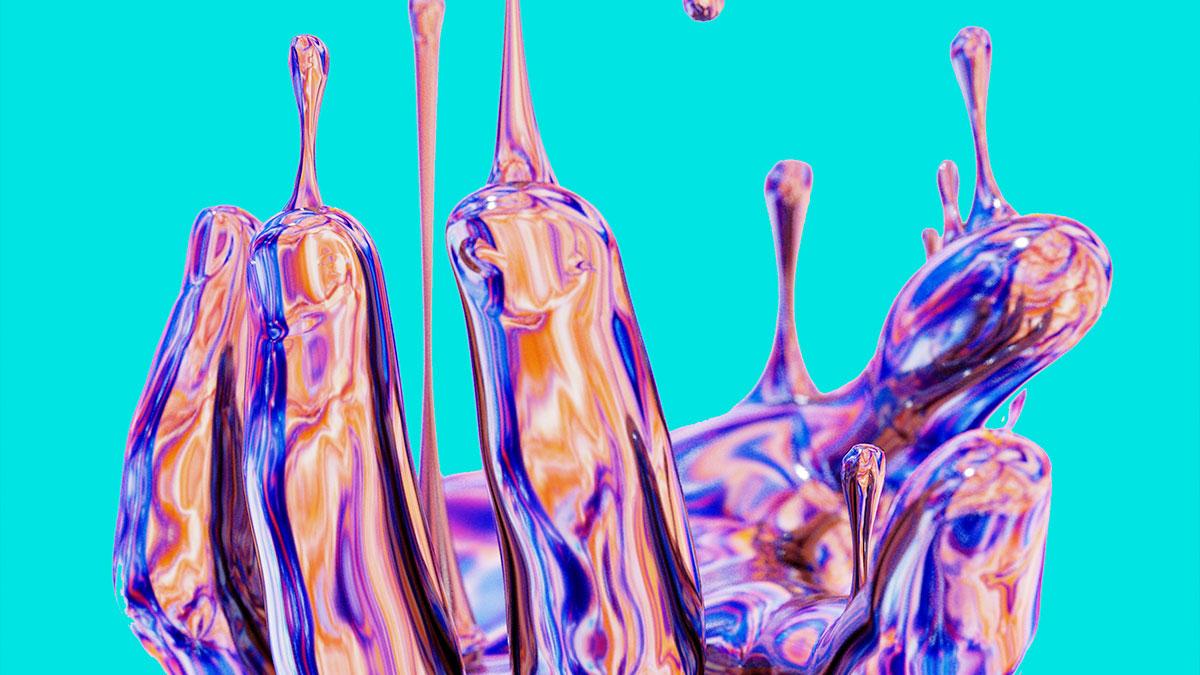

Virtual try-on for apparel shows you how clothes look on a variety of real models.

Here’s how it works: Our new generative AI model can take just one clothing image and accurately reflect how it would drape, fold, cling, stretch and form wrinkles and shadows on a diverse set of real models in various poses. We selected people ranging in size from XXS to 4XL and representing different skin tones (using the Monk Skin Tone Scale as a guide), body shapes, ethnicities and hair types.

The feature has launched first in the U.S., where shoppers can virtually try on women’s tops from brands across Google, including Anthropologie, Everlane, H&M and LOFT. Just tap products with the “Try On” badge on Search and select the model that resonates most with you.

Working alongside our Shopping Graph, the world’s most comprehensive data set of products and sellers, this technology can scale to more brands and items over time. Look out for more options coming to virtual try-on for apparel, including men’s tops launching later this year.

Refine a product to find exactly what you want

Do you like that top, but want a less pricey version? Or this jacket, but in a different pattern? Associates can help with this in a store, suggesting and finding other options based on what you’ve already tried on. Now you can get that extra hand when you shop for clothes online.

If you shop in the U.S., our new guided refinements can help you fine-tune products until you find the perfect piece. Thanks to machine learning and new visual matching algorithms, you can refine using inputs like color, style and pattern. And unlike shopping in a store, you’re not limited to one retailer: You’ll see options from stores across the web. You can find this feature, available for tops to start, right within product listings.